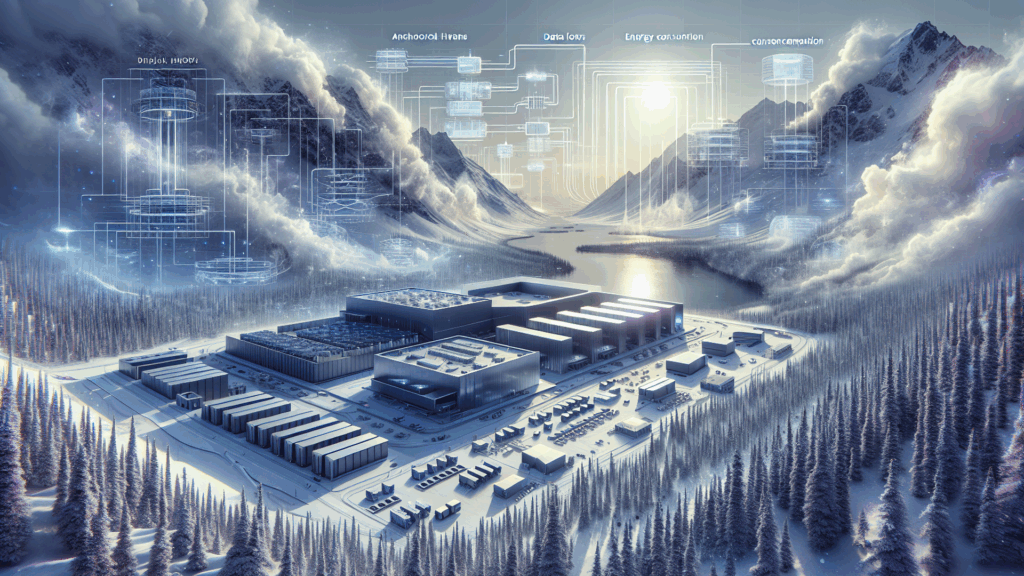

Anchorage is waking up to AI-era data center growth and moving to lock in stricter rules for “energy‑intensive facilities.”

The proposed ordinance would require new data centers to show proof of sufficient grid capacity, water, sewer, and fire protection, which directly affects how large a GPU cluster can realistically be deployed there.

This is happening against a backdrop of U.S. data center power use projected to jump from ~4% to as much as 12% of national electricity by 2028, with GPUs and cooling as the main drivers.

Water use and cooling are front and center: U.S. data centers already consume tens of billions of gallons of water annually, and that demand will constrain designs in a resource‑sensitive region like Alaska.

Natural gas currently supplies 40% of data center power, and Anchorage is explicitly linking its data center policy to securing long‑term gas supply, signaling a fossil‑heavy near‑term AI infra path.

Compared with hubs like Virginia or Texas, Alaska is still a small player with only seven data centers, but the outcome of this policy debate will shape whether it can attract hyperscale or AI‑specific builds.

The piece is worth a read for operators tracking how local permitting, grid readiness, and fuel politics are starting to gate where and how new AI data centers get built.

Source: Questions surrounding data center development arrive in Anchorage